
Registration and Accommodation

The three (+1) day conference programme (26-29 January 2014) includes oral and poster sessions at the Barbican Centre in central London, social events, and a technical tour of the BBC in London (participant numbers are limited and places are allocated on a first-come, first-served basis). A tutorial day (26 January 2014) will be held at Queen Mary University of London and is free to attend for all delegates.

Following these links, you will find the detailed tutorial programme and the technical conference programme.

Information for poster and demo presenters can be found on this page.

Papers and proceedings are available from the AES E-library.

The programme features 3 keynotes and 4 invited talks by world-leading researchers in the field of Semantic Audio as well as from industry. Please see the abstracts of the talks and short biographies of the speakers using the links below.

Meinard Müller (International Audio Laboratories Erlangen, Germany)

Gaël Richard (TELECOM ParisTech and CNRS, France)

Gerhard Widmer (Department of Computational Perception, Johannes Kepler University, Linz, Austria)

Tuomas Eerola (Department of Music, Durham University, UK)

Yves Raimond (BBC R&D, London, UK)

Xavier Serra (Music Technology Group, Universitat Pompeu Fabra, Barcelona, Spain)

Jay LeBoeuf (Strategic Technology Director, iZotope Inc., USA)

An up-to-date list of the invited talks and further details of the main conference programme will be published here as they become available.

A technical tour will take place at the new BBC Broadcasting House on Wednesday 29 January (approximately 3pm-5pm). The tour is free for conference participants, but you will need to register upon arrival.

Broadcasting House is the new state-of-the-art multimedia broadcasting centre in the heart of London. This world-class facility is home to several radio and television networks, including BBC Radio 1 and BBC World Service, accommodates some 6000 staff and serves a worldwide audience of 241 million people. It is the iconic new home for the BBC’s network and global services in Television, Radio, News and Online. New Broadcasting House heralds a simpler, more integrated digital service for audiences, and a simpler, more creative environment for staff.

The tour will provide an introduction to several live media production and post-production facilities, and an overview of the production and broadcast workflows within the BBC. Participation is free, but places are limited to 40 delegates and are available on a first-come, first-served basis. Groups will be taken from the conference venue; if you need to travel on your own, please find travel information to BBC Broadcasting House on the venues page.

Two special sessions are organised: "Semantic Audio Organization and Retrieval – Integrating User and Audio Information", chaired by Jan Larsen and Mark Plumbley, and "Intelligent Audio Production", chaired by Josh Reiss and Bryan Pardo. Special session papers are fully peer-reviewed and form part of the conference proceedings.

Semantic Audio Organization and Retrieval – Integrating User and Audio Information

Higher-level semantic representations are essential for organization, search, retrieval, and discovery of audio and music. In order to mitigate semantic ambiguity and ensure an actionable representation, the formulation and implementation of an integrated framework is required. The framework should ideally consider all available information concerning the content and context of audio objects, as well as information about users’ context, demographics and usage activity, and content descriptions such as tags or scores. The objective of the special session is to have experts in the field address current trends in modelling, retrieval and interfacing.

Special Session on Intelligent Audio Production

The aim of this session is to bring together, for the first time, the disparate community working in this field. The session will consider ways in which semantic information can be used to create intelligent systems that are capable of performing audio production tasks which are typically done manually by a sound engineer. Particular attention is paid to the psychoacoustics and knowledge engineering needed to devise such systems. Different signal processing and machine learning approaches will be considered, as well as intelligent user interface designs that enable their use. The state of the art and future directions for intelligent audio production will also be discussed.

An additional tutorial day (26 January 2014) will be held at Queen Mary University of London, covering effective research practices (e.g. the use of version control and unit testing in audio research), intelligent audio production and automatic music mixing.

Venue: Queen Mary University of London (Mile End campus), Engineering Building, MAT Lab (please look for the glass door entrance of the Engineering Building on Mile End Road)

Tutorial 1: "Reusable software and reproducibility in music research"

by Chris Cannam, Mark Plumbley, SoundSoftware.ac.uk

The need to develop and reuse software to process data is almost universal in audio and music research. Many methods are developed in tandem with software implementations, and some of them are too complex or too fundamentally software-based to be reproduced readily from a published paper alone. For this reason, it is helpful for sustainable research to have software and data published along with papers. In practice, non-publication of code and data is still the norm and research software is commonly lost in the years following publication of the associated methods.

The tutorial will rapidly cover the use of version control software, code hosting facilities, sharing and review of code within a team, aspects of testing and provenance, and software licensing for publication. The focus will be on using widely available free tools such as Python, Mercurial and Bitbucket. This tutorial will be of immediate practical interest to researchers within the community, and will also be highly relevant to research supervisors and research group leaders with an interest in policy and guidance.
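As an illustration of the kind of unit testing mentioned above, here is a minimal sketch using Python's standard `unittest` module. It is not taken from the tutorial material: the `rms` function and the test names are hypothetical, chosen only to show how a small piece of audio research code can be checked automatically.

```python
import math
import unittest


def rms(samples):
    """Root-mean-square value of a sequence of samples (hypothetical helper)."""
    if not samples:
        raise ValueError("rms() needs at least one sample")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


class RmsTest(unittest.TestCase):
    def test_constant_signal(self):
        # A constant signal has an RMS equal to its absolute value.
        self.assertAlmostEqual(rms([0.5] * 100), 0.5)

    def test_full_scale_sine(self):
        # A full-scale sine over whole periods has an RMS of 1/sqrt(2).
        n = 1000
        sine = [math.sin(2 * math.pi * 10 * k / n) for k in range(n)]
        self.assertAlmostEqual(rms(sine), 1 / math.sqrt(2), places=6)

    def test_empty_input_is_rejected(self):
        # Empty input should fail loudly rather than divide by zero.
        with self.assertRaises(ValueError):
            rms([])
```

Saved under a name such as `test_rms.py` (an assumed filename), the tests can be run with `python -m unittest test_rms`. Keeping such tests under version control alongside the code gives a simple record that published results were produced by code that passed them.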

Tutorial 2: "Semantic Audio and Music Production"

by Michael Terrell, Lasse Vetter, Mix Elephant, London, UK

The range of applications for semantic audio technology has grown rapidly in recent years, particularly in the fields of music production and consumption. Metadata is used to tag and categorise content (e.g. genre, emotion), to select music and so automatically generate playlists, and more recently to perform music production tasks, i.e. to mix multitrack music projects. What distinguishes music production applications, and mixing in particular, is that we are not simply extracting metadata from the audio, but are processing the audio to change the metadata features of the mix so that they adhere to predefined objectives. There are two key aspects to this work: i) the development of metadata “features” that describe the objectives of music production tasks, and ii) a means to manipulate the control parameters on the mixing device to realise these objectives. This tutorial will provide an overview of the state of the art in this field, which is more commonly referred to as “automatic mixing”. It will discuss current features and models that are used to describe music production tasks, and will give an overview of psychophysical methods that can be used to generate new features. Furthermore, through practical examples, it will provide an introduction to numerical optimisation, a critical tool when mapping features to mixing controls.
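The idea of mapping features to mixing controls by numerical optimisation can be sketched in a few lines of Python. This is only an illustrative toy, not the tutorial's method: the feature (each track's RMS level relative to the mean level of all tracks), the target values, the test signals and all function names are assumptions made for the example, and plain gradient descent with finite-difference gradients stands in for whatever optimiser a real system would use.

```python
import math


def rms_db(samples):
    """RMS level of a track in dB relative to full scale."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return 10.0 * math.log10(mean_square)


def relative_levels(levels_db, gains_db):
    """Assumed mix feature: each track's level relative to the mean track level."""
    mixed = [level + gain for level, gain in zip(levels_db, gains_db)]
    mean = sum(mixed) / len(mixed)
    return [m - mean for m in mixed]


def cost(levels_db, gains_db, targets_db):
    """Squared distance between the current features and the mix objective."""
    feats = relative_levels(levels_db, gains_db)
    return sum((f - t) ** 2 for f, t in zip(feats, targets_db))


def optimise_gains(levels_db, targets_db, step=0.25, iterations=200, eps=1e-4):
    """Gradient descent on the fader gains, using central finite differences."""
    gains = [0.0] * len(levels_db)
    for _ in range(iterations):
        grad = []
        for i in range(len(gains)):
            up, down = gains[:], gains[:]
            up[i] += eps
            down[i] -= eps
            diff = cost(levels_db, up, targets_db) - cost(levels_db, down, targets_db)
            grad.append(diff / (2 * eps))
        gains = [g - step * d for g, d in zip(gains, grad)]
    return gains


def sine(amplitude, n=4800, freq=100.0, rate=48000.0):
    """Synthetic test track: a sine wave covering a whole number of periods."""
    return [amplitude * math.sin(2 * math.pi * freq * k / rate) for k in range(n)]


tracks = [sine(0.5), sine(0.1), sine(0.02)]
levels = [rms_db(t) for t in tracks]
targets = [3.0, 0.0, -3.0]  # assumed objective: relative levels in dB, summing to zero
gains = optimise_gains(levels, targets)
print([round(g, 2) for g in gains])
```

Because the gradient is computed numerically, `relative_levels` could be swapped for a more perceptually motivated feature without changing the optimiser, which is the main reason for using finite differences in this sketch.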

The conference will provide a great opportunity to network and meet other researchers as well as delegates from industry. Our social programme will provide the right atmosphere to relax or to facilitate discussion, and includes (optional) visits to London pubs.

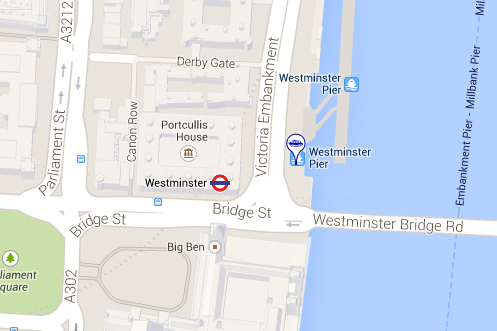

The Gala Dinner will take place on Tuesday evening (28 January) from approximately 18.00-22.00. Venue: Elizabethan River Boat. Meeting Point: Westminster Pier.

Please be at Westminster Pier before 6pm. The boat MUST leave on time because of the restricted pier allocation slot. Those who are late will unfortunately miss the dinner.

Travel Information:

Closest Underground: Westminster tube station

The pier is located to the left of Westminster Bridge (please do not cross the bridge to the south side). Meet at the pier entrance on Victoria Embankment.

Map: