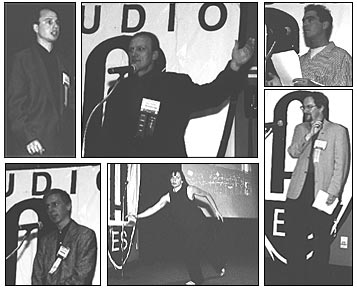

Demo participants: clockwise from top left, Jeremy Cooperstock, Wieslaw Woszczyk, and Zack Settel of McGill University; Peter Marshall, Canarie Inc.; NYU dancer; and Robert Rowe, New York University.

On 1999 September 26 at the AES 107th Convention in New York, members of the Society's Technical Committee on Network Audio Systems demonstrated the first-ever real-time transmission of DVD-quality, multichannel audio over the Internet.

A live performance by the McGill University Swing Band, playing in Montreal, Canada, was streamed as a 5.1-channel audio signal with a simultaneous video feed to a theater in the Cantor Film Center of New York University. The audience experienced surround sound and video of the quality provided by movie theaters. An NYU student danced on stage in real time to the music of the band.

The 5.1-channel sound mix was prepared at McGill as a 48-kHz, 16-bit program, which was then Dolby Digital encoded using an off-the-shelf Dolby DP569 encoder unit at 640 kbps. The Dolby Digital bitstream was encapsulated for interconnectivity purposes in an AES/EBU stream at 1.5 Mbps, which was then sent over the high-speed Internet link. Video was transmitted at about the same data rate using Cisco's IP/TV system with MPEG-1 compression. At NYU, the audio signal was decoded from Dolby Digital back to PCM using Dolby's DP562 decoder unit.
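The data rates quoted above can be checked with a little arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes that the 1.5-Mbps figure refers to the audio payload of a two-channel, 16-bit, 48-kHz carrier (framing overhead is ignored), and all names in it are hypothetical rather than taken from the demonstration software.

```python
# Back-of-the-envelope bit rates for the audio chain described in the article.
# Assumption: 1.5 Mbps is the payload of a 2-channel, 16-bit, 48-kHz carrier.

SAMPLE_RATE_HZ = 48_000      # from the article
BITS_PER_SAMPLE = 16         # from the article
CHANNELS_5_1 = 6             # five full-range channels plus one LFE channel
DOLBY_DIGITAL_BPS = 640_000  # encoder rate from the article


def pcm_bit_rate(channels, sample_rate_hz=SAMPLE_RATE_HZ, bits=BITS_PER_SAMPLE):
    """Return the raw PCM bit rate in bits per second."""
    return channels * sample_rate_hz * bits


# Uncompressed 5.1-channel program before Dolby Digital encoding.
uncompressed_bps = pcm_bit_rate(CHANNELS_5_1)

# Two-channel carrier payload, which the article rounds to 1.5 Mbps.
carrier_bps = pcm_bit_rate(2)

# How much the encoder reduces the 5.1-channel program.
compression_ratio = uncompressed_bps / DOLBY_DIGITAL_BPS

print(f"Uncompressed 5.1 PCM: {uncompressed_bps / 1e6:.3f} Mbps")
print(f"Two-channel carrier:  {carrier_bps / 1e6:.3f} Mbps")
print(f"Compression ratio:    {compression_ratio:.1f}:1")
```

Under these assumptions the 640-kbps Dolby Digital bitstream occupies well under half of the carrier payload, so the carrier is used for interconnection rather than for efficiency.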

Four transmissions were viewed during the demonstration. The first employed a 23-second buffer to accommodate possible network congestion, but the final three used a far less conservative 3-second buffer.
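To give a feel for what these buffer lengths mean in data terms, the sketch below converts a buffer duration into bytes. It assumes the buffer holds only the audio stream at the nominal 1.5 Mbps quoted in the article; the function name is hypothetical, and the actual buffering scheme of the demonstration software is not described in the source.

```python
# Rough size of a stream buffer of a given duration.
# Assumption: the buffer holds the audio stream only, at a nominal 1.5 Mbps.

AUDIO_STREAM_BPS = 1_500_000  # nominal stream rate from the article


def buffer_size_bytes(seconds, bit_rate_bps=AUDIO_STREAM_BPS):
    """Return the number of bytes needed to hold `seconds` of the stream."""
    return seconds * bit_rate_bps // 8


conservative = buffer_size_bytes(23)  # first transmission
aggressive = buffer_size_bytes(3)     # final three transmissions

print(f"23-second buffer: {conservative / 1e6:.2f} MB")
print(f" 3-second buffer: {aggressive / 1e6:.2f} MB")
```

The shorter buffer also means the stream can ride out only about 3 seconds of stalled delivery before playback is affected, which is the trade-off the later transmissions accepted.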

Although the data traveled from Montreal to New York over the high-speed Canarie CA-Net (Canada) and Internet2 (USA) backbones, no guarantees of available bandwidth could be provided and no special tuning of the links was done. The only concession made by the network administrators was that the incoming Usenet news feeds at both universities were disabled during the first part of the demonstration. During the final performance, the network administrators turned on the news feed coming into the New York University computer network, subjecting the demonstration to intense competition for bandwidth.

Despite occasional packet loss, the error recovery mechanism built into the transmission software sustained an uninterrupted stream of Dolby Digital audio throughout the demo. On three occasions during the final performance, the video froze briefly but quickly recovered.

The underlying software used in the demonstration was developed at McGill University by a team led by Jeremy Cooperstock, a professor in the Department of Electrical and Computer Engineering at McGill. According to Professor Wieslaw Woszczyk, director of the McGill graduate program in sound recording and chair of the AES Technical Council, "this technology opens the way for people in entertainment, business, education, or research to collaborate live online. It will be much more appealing than the current teleconferencing telephone model because it will offer an experience more like a movie theater. For collaborative musical performances and compositions over the Internet, it will be like a virtual classroom."

J. Audio Eng. Soc., Vol. 47, No. 11, 1999 November, page 1022