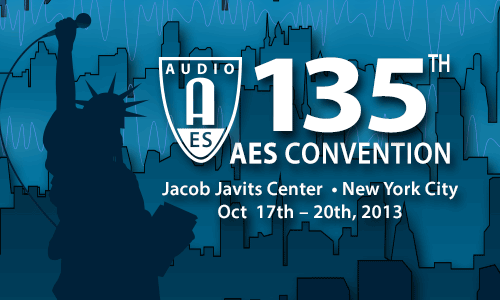

AES New York 2013

Recording & Production Track Event Details

Wednesday, October 16, 5:00 pm — 7:00 pm (Room 1E10)

Workshop: W21 - Lies, Damn Lies, and Audio Gear Specs

Chair: Ethan Winer, RealTraps - New Milford, CT, USA

Panelists:

Scott Dorsey, Williamsburg, VA, USA

David Moran, Boston Audio Society - Wayland, MA, USA

Mike Rivers, Gypsy Studio - Falls Church, VA, USA

Abstract:

The fidelity of audio devices is easily measured, yet vendors and magazine reviewers often omit important details. For example, a loudspeaker review will state the size of the woofer but not the low frequency cut-off. Or the cut-off frequency is given, but without stating how many dB down or the rate at which the response rolls off below that frequency. Or it will state distortion for the power amps in a powered monitor but not the distortion of the speakers themselves, which of course is what really matters. This workshop therefore defines a list of standards that manufacturers and reviewers should follow when describing the fidelity of audio products. It will also explain why measurements are a better way to assess fidelity than listening alone.

Excerpts from this workshop are available on YouTube.

Thursday, October 17, 9:00 am — 11:00 am (Room 1E12)

Live Sound Seminar: LS1 - AC Power and Grounding

Chair: Bruce C. Olson, Olson Sound Design - Brooklyn Park, MN, USA; Ahnert Feistel Media Group - Berlin, Germany

Panelist:

Bill Whitlock, Jensen Transformers, Inc. - Chatsworth, CA, USA; Whitlock Consulting - Oxnard, CA, USA

Abstract:

There is a lot of misinformation about what is needed for AC power for events, and some of the advice in circulation is life-threatening. This panel will discuss how to provide AC power properly and safely and without causing noise problems. This session will cover power for small to large systems, from a couple of boxes on sticks up to multiple stages in ballrooms, road houses, and event centers; large scale installed systems, including multiple transformers and company switches, service types, generator sets, 1ph, 3ph, 240/120, 208/120. Get the latest information on grounding and typical configurations from this panel of industry veterans.

Thursday, October 17, 9:00 am — 10:30 am (Room 1E13)

Tutorial: T1 - FXpertise: Compression

Presenter: Alex Case, University of Massachusetts Lowell - Lowell, MA, USA

Abstract:

Compressors were invented to control dynamic range. The next day, engineers started doing so much more—increasing loudness, improving intelligibility, adding distortion, extracting ambience, and, most importantly, reshaping timbre. This diversity of signal processing possibilities is realized only indirectly, by choosing the right compressor for the job and coaxing the parameters of ratio, threshold, attack, and release into place. Learn when to reach for compression, know a good starting place for compressor settings, and advance your understanding of what to listen for and which way to tweak.

Thursday, October 17, 9:00 am — 11:00 am (Room 1E07)

Paper Session: P1 - Transducers—Part 1: Microphones

Chair:

Helmut Wittek, SCHOEPS GmbH - Karlsruhe, Germany

P1-1 Portable Spherical Microphone for Super Hi-Vision 22.2 Multichannel Audio—Kazuho Ono, NHK Engineering System, Inc. - Setagaya-ku, Tokyo, Japan; Toshiyuki Nishiguchi, NHK Science & Technology Research Laboratories - Setagaya, Tokyo, Japan; Kentaro Matsui, NHK Science & Technology Research Laboratories - Setagaya, Tokyo, Japan; Kimio Hamasaki, NHK Science & Technology Research Laboratories - Setagaya, Tokyo, Japan

NHK has been developing a portable microphone for the simultaneous recording of 22.2ch multichannel audio. The microphone is 45 cm in diameter and has acoustic baffles that partition the sphere into angular segments, in each of which an omnidirectional microphone element is mounted. Owing to the effect of the baffles, each segment has narrow-angle directivity with a constant beam width at frequencies above 6 kHz. The directivity becomes wider as frequency decreases and is almost omnidirectional below 500 Hz. The authors also developed a signal processing method that improves the directivity below 800 Hz.

Convention Paper 8922

P1-2 Sound Field Visualization Using Optical Wave Microphone Coupled with Computerized Tomography—Toshiyuki Nakamiya, Tokai University - Kumamoto, Japan; Fumiaki Mitsugi, Kumamoto University - Kumamoto, Japan; Yoichiro Iwasaki, Tokai University - Kumamoto, Japan; Tomoaki Ikegami, Kumamoto University - Kumamoto, Japan; Ryoichi Tsuda, Tokai University - Kumamoto, Japan; Yoshito Sonoda, Tokai University - Kumamoto, Kumamoto, Japan

The novel method, which we call the “Optical Wave Microphone (OWM)” technique, is based on a Fraunhofer diffraction effect between a sound wave and a laser beam. The light diffraction technique is an effective sensing method to detect the sound and is flexible for practical uses as it involves only a simple optical lens system. OWM is also very useful to detect the sound wave without disturbing the sound field. This new method can realize high accuracy measurement of slight density change of atmosphere. Moreover, OWM can be used for sound field visualization by computerized tomography (CT) because the ultra-small modulation by the sound field is integrated along the laser beam path.

Convention Paper 8923

P1-3 Proposal of Optical Wave Microphone and Physical Mechanism of Sound Detection—Yoshito Sonoda, Tokai University - Kumamoto, Kumamoto, Japan; Toshiyuki Nakamiya, Tokai University - Kumamoto, Japan

An optical wave microphone with no diaphragm, which uses wave optics and a laser beam to detect sounds, can measure sounds without disturbing the sound field. The theoretical equation for this measurement can be derived from the optical diffraction integration equation coupled to the optical phase modulation theory, but the physical interpretation or meaning of this phenomenon is not clear from the mathematical calculation process alone. In this paper the physical meaning in relation to wave-optical processes is considered. Furthermore, the spatial sampling theorem is applied to the interaction between a laser beam with a small radius and a sound wave with a long wavelength, showing that the wavenumber resolution is lost in this case, and the spatial position of the maximum intensity peak of the optical diffraction pattern generated by a sound wave is independent of the sound frequency. This property can be used to detect complex tones composed of different frequencies with a single photo-detector. Finally, the method is compared with the conventional Raman-Nath diffraction phenomena relating to ultrasonic waves.

AES 135th Convention Best Peer-Reviewed Paper Award Cowinner

Convention Paper 8924

P1-4 Numerical Simulation of Microphone Wind Noise, Part 2: Internal Flow—Juha Backman, Nokia Corporation - Espoo, Finland

This paper discusses the use of computational fluid dynamics (CFD) for the analysis of microphone wind noise. The previous part of this work showed that an external flow produces a pressure difference on the external boundary, and this pressure causes flow in the microphone internal structures, mainly between the protective grid and the diaphragm. The examples presented in this work describe the effect of microphone grille structure and microphone diaphragm properties on the wind noise sensitivity related to the behavior of this kind of internal flow.

Convention Paper 8925

Thursday, October 17, 9:00 am — 12:00 pm (Room 1E09)

Paper Session: P2 - Signal Processing—Part 1

Chair:

Jaeyong Cho, Samsung Electronics DMC R&D Center - Suwon, Korea

P2-1 Linear Phase Implementation in Loudspeaker Systems: Measurements, Processing Methods, and Application Benefits—Rémi Vaucher, NEXO - Plailly, France

The aim of this paper is to present a new generation of EQ. It provides a way to ensure phase compatibility from 20 Hz to 20 kHz over a range of different speaker cabinets. This method is based on a mix of FIR filters and IIR filters. The use of FIR filters allows the phase to be tuned independently of the magnitude and allows an acoustic linear phase above 500 Hz. All targets used to compute the FIR coefficients are based upon extensive measurement and subjective listening tests. A template has been set to normalize the crossover frequencies in the low range, enabling compatibility of every sub-bass with the main cabinets.

Convention Paper 8926

P2-2 Applications of Inverse Filtering to the Optimization of Professional Loudspeaker Systems—Daniele Ponteggia, Studio Ponteggia - Terni (TR), Italy; Mario Di Cola, Audio Labs Systems - Casoli (CH), Italy

The application of FIR filter technology to implement inverse filtering in professional loudspeaker systems is now easier and more affordable because of the latest developments in DSP technology and the existence of a new DSP platform dedicated to the end user. This paper presents an analysis, based on real-world examples, of a possible methodology for synthesizing an appropriate inverse filter, both to process a single driver in a multi-way system from a time-domain perspective and to process the output pass-band of a multi-way system for phase linearization. Results for several applications are analyzed and discussed using real-world tests and measurements.

Convention Paper 8927

P2-3 Live Event Performer Tracking for Digital Console Automation Using Industry-Standard Wireless Microphone Systems—Adam J. Hill, University of Derby - Derby, Derbyshire, UK; Kristian "Kit" Lane, University of Derby - Derby, UK; Adam P. Rosenthal, Gand Concert Sound - Elk Grove Village, IL, USA; Gary Gand, Gand Concert Sound - Elk Grove Village, IL, USA

The ever-increasing popularity of digital consoles for audio and lighting at live events provides a greatly expanded set of possibilities regarding automation. This research works toward a solution for performer tracking using wireless microphone signals that operates within the existing infrastructure at professional events. Principles of navigation technology such as received signal strength (RSS), time difference of arrival (TDOA), angle of arrival (AOA), and frequency difference of arrival (FDOA) are investigated to determine their suitability and practicality for use in such applications. Analysis of potential systems indicates that performer tracking is feasible over the width and depth of a stage using only two antennas with a suitable configuration, but limitations of current technology restrict the practicality of such a system.

Convention Paper 8928

P2-4 Real-Time Simulation of a Family of Fractional-Order Low-Pass Filters—Thomas Hélie, IRCAM-CNRS UMR 9912-UPMC - Paris, France

This paper presents a family of low-pass filters, the attenuation of which can be continuously adjusted from 0 decibel per octave (filter is a unit gain) to -6 decibels per octave (standard one-pole filter). This continuum is produced through a filter of fractional-order between 0 (unit gain) and 1 (one-pole filter). Such a filter proves to be a (continuous) infinite combination of one-pole filters. Efficient approximations are proposed from which simulations in the time-domain are built.

Convention Paper 8929
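The slope range quoted in the P2-4 abstract can be illustrated with a small numerical check. The sketch below assumes one common fractional-order form, H(s) = (1 + s/wc)^(-order); that form, the function names, and the 100 Hz cutoff are illustrative assumptions, not details taken from the paper. Under this assumption the asymptotic slope works out to roughly -6.02 × order dB per octave, so an order of 0 gives unit gain and an order of 1 gives the standard one-pole slope.

```python
import math

def fractional_lowpass_gain_db(freq_hz, cutoff_hz, order):
    """Magnitude in dB of the ASSUMED model H(s) = (1 + s/wc)**(-order)."""
    ratio = freq_hz / cutoff_hz
    # |H(jw)|^2 = (1 + ratio^2)^(-order), so 20*log10|H| is:
    return -10.0 * order * math.log10(1.0 + ratio * ratio)

def slope_db_per_octave(cutoff_hz, order, freq_hz=None):
    """Gain change across one octave, measured far above the cutoff."""
    if freq_hz is None:
        freq_hz = 1000.0 * cutoff_hz  # well into the asymptotic region
    upper = fractional_lowpass_gain_db(2.0 * freq_hz, cutoff_hz, order)
    lower = fractional_lowpass_gain_db(freq_hz, cutoff_hz, order)
    return upper - lower

# Hypothetical 100 Hz cutoff; orders between 0 (unit gain) and 1 (one pole).
for order in (0.0, 0.25, 0.5, 1.0):
    print(order, round(slope_db_per_octave(100.0, order), 2))
```

This only evaluates the magnitude response; it does not attempt the time-domain approximations that the paper proposes.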

P2-5 A Computationally Efficient Behavioral Model of the Nonlinear Devices—Jaeyong Cho, Samsung Electronics DMC R&D Center - Suwon, Korea; Hanki Kim, Samsung Electronics DMC R&D Center - Suwon, Korea; Seungkwan Yu, Samsung Electronics DMC R&D Center - Suwon, Korea; Haekwang Park, Samsung Electronics DMC R&D Center - Suwon, Korea; Youngoo Yang, Sungkyunkwan University - Suwon, Korea

This paper presents a new computationally efficient behavioral model that reproduces the output signal of nonlinear devices for real-time systems. The proposed model is designed using the memory gain structure, and its accuracy and computational complexity are verified against other nonlinear models. The model parameters are extracted from a vacuum tube amplifier, Heathkit’s W-5M, using an exponentially swept sinusoidal signal. The experimental results show that the proposed model requires 27% of the computational load of the generalized Hammerstein model while maintaining similar modeling accuracy.

Convention Paper 8930

P2-6 High-Precision Score-Based Audio Indexing Using Hierarchical Dynamic Time Warping—Xiang Zhou, Bose Corporation - Framingham, MA, USA; Fangyu Ke, University of Rochester - Rochester, NY, USA; Cheng Shu, University of Rochester - Rochester, NY, USA; Gang Ren, University of Rochester - Rochester, NY, USA; Mark F. Bocko, University of Rochester - Rochester, NY, USA

We propose a novel audio signal processing algorithm for high-precision score-based audio indexing that accurately maps a music score to its corresponding audio. Specifically, we improve the time precision of existing score-audio alignment algorithms to find the accurate positions of audio onsets and offsets. We achieve higher time precision by (1) improving the resolution of alignment sequences, and (2) admitting a hierarchy of spectrographic analysis results as audio alignment features. The performance of our proposed algorithm is verified by comparing the segmentation results with manually composed reference datasets. Our proposed algorithm achieves robust alignment results and enhanced segmentation accuracy and thus is suitable for audio engineering applications such as automatic music production and human-media interactions.

Convention Paper 8931

Thursday, October 17, 10:30 am — 12:00 pm (Room 1E13)

Workshop: W2 - FX Design Panel: Compression

Chair: Alex Case, University of Massachusetts Lowell - Lowell, MA, USA

Panelists:

David Derr, Empirical Labs - Parsippany, NJ, USA

Dave Hill, Crane Song - Superior, WI, USA; Dave Hill Designs

Colin McDowell, McDSP - Sunnyvale, CA, USA

Abstract:

Meet the designers whose talents and philosophies are reflected in the products they create, driving sound quality, ease of use, reliability, price, and all the other attributes that motivate us to patch, click, and tweak their effects processors.

Thursday, October 17, 11:00 am — 1:00 pm (Room 1E12)

Workshop: W4 - Microphone Specifications—Believe it or Not

Chair: Eddy B. Brixen, EBB-consult/DPA Microphones - Smorum, Denmark

Panelists:

Juergen Breitlow, Neumann - Berlin, Germany

Jackie Green, Audio-Technica U.S., Inc. - Stow, OH, USA

Bill Whitlock, Jensen Transformers, Inc. - Chatsworth, CA, USA; Whitlock Consulting - Oxnard, CA, USA

Helmut Wittek, SCHOEPS GmbH - Karlsruhe, Germany

Joerg Wuttke, Joerg Wuttke Consultancy - Pfinztal, Germany

Abstract:

There are lots and lots of microphones available to the audio engineer. The final choice is often made on the basis of experience or perhaps just habit. (Sometimes the mic is chosen because of its looks … .) Nevertheless, there is essential and very useful information to be found in the microphone specifications. This workshop will present the most important microphone specs and provide the attendees with up-to-date information on how these are obtained and understood. Each member of the panel, all associated with top industry brands, will present one item from the spec sheet. The workshop takes a critical look at how specs are presented to the user, what to look for, and what to expect. The workshop is organized by the AES Technical Committee on Microphones and Applications.

This session is presented in association with the AES Technical Committee on Microphones and Applications

Thursday, October 17, 2:15 pm — 3:45 pm (Room 1E08)

Broadcast and Streaming Media: B3 - Listener Fatigue and Retention

Chair: Richard Burden, Richard W. Burden Associates - Canoga Park, CA, USA

Panelists:

Frank Foti, Telos - New York, NY, USA

Greg Ogonowski, Orban - San Leandro, CA, USA

Sean Olive, Harman International - Northridge, CA, USA

Robert Reams, Psyx Research

Elliot Scheiner

Abstract:

This panel will discuss listener fatigue and its impact on listener retention. While listener fatigue is an issue of interest to broadcasters, it is also an issue of interest to telecommunications service providers, consumer electronics manufacturers, music producers, and others. Fatigued listeners to a broadcast program may tune out, while fatigued listeners to a cell phone conversation may switch to another carrier, and fatigued listeners to a portable media player may purchase another company’s product. The experts on this panel will discuss their research and experiences with listener fatigue and its impact on listener retention.

Thursday, October 17, 2:30 pm — 4:30 pm (Room 1E07)

Paper Session: P4 - Room Acoustics

Chair:

Ben Kok, SCENA acoustic consultants - Uden, The Netherlands

P4-1 Investigating Auditory Room Size Perception with Autophonic Stimuli—Manuj Yadav, University of Sydney - Sydney, NSW, Australia; Densil A. Cabrera, University of Sydney - Sydney, NSW, Australia; Luis Miranda, University of Sydney - Sydney, NSW, Australia; William L. Martens, University of Sydney - Sydney, NSW, Australia; Doheon Lee, University of Sydney - Sydney, NSW, Australia; Ralph Collins, University of Sydney - Sydney, NSW, Australia

Although looking at a room gives a visual indicator of its “size,” auditory stimuli alone can also provide an appreciation of room size. This paper investigates such aurally perceived room size by allowing listeners to hear the sound of their own voice in real-time through two modes: natural conduction and auralization. The auralization process involved convolution of the talking-listener’s voice with an oral-binaural room impulse response (OBRIR; some from actual rooms, and others manipulated), which was output through head-worn ear-loudspeakers, and thus augmented natural conduction with simulated room reflections. This method allowed talking-listeners to rate room size without additional information about the rooms. The subjective ratings were analyzed against relevant physical acoustic measures derived from OBRIRs. The results indicate an overall strong effect of reverberation time on the room size judgments, expressed as a power function, although energy measures were also important in some cases.

Convention Paper 8934

P4-2 Digitally Steered Columns: Comparison of Different Products by Measurement and Simulation—Stefan Feistel, AFMG Technologies GmbH - Berlin, Germany; Anselm Goertz, Institut für Akustik und Audiotechnik (IFAA) - Herzogenrath, Germany

Digitally steered loudspeaker columns have become the predominant means to achieve satisfying speech intelligibility in acoustically challenging spaces. This work compares the performance of several commercially available array loudspeakers in a medium-size, reverberant church. Speech intelligibility as well as other acoustic quantities are compared on the basis of extensive measurements and computer simulations. The results show that formally different loudspeaker products provide very similar transmission quality. Also, measurement and modeling results match accurately within the uncertainty limits.

Convention Paper 8935

P4-3 A Concentric Compact Spherical Microphone and Loudspeaker Array for Acoustical Measurements—Luis Miranda, University of Sydney - Sydney, NSW, Australia; Densil A. Cabrera, University of Sydney - Sydney, NSW, Australia; Ken Stewart, University of Sydney - Sydney, NSW, Australia

Several commonly used descriptors of acoustical conditions in auditoria (ISO 3382-1) utilize omnidirectional transducers for their measurements, disregarding the directional properties of the source and the direction of arrival of reflections. This situation is further complicated when the source and the receiver are collocated as would be the case for the acoustical characterization of stages as experienced by musicians. A potential solution to this problem could be a concentric compact microphone and loudspeaker array, capable of synthesizing source and receiver spatial patterns. The construction of a concentric microphone and loudspeaker spherical array is presented in this paper. Such a transducer could be used to analyze the acoustic characteristics of stages for singers, while preserving the directional characteristics of the source, acquiring spatial information of reflections and preserving the spatial relationship between source and receiver. Finally, its theoretical response and optimal frequency range are explored.

Convention Paper 8936

P4-4 Adapting Loudspeaker Array Radiation to the Venue Using Numerical Optimization of FIR Filters—Stefan Feistel, AFMG Technologies GmbH - Berlin, Germany; Mario Sempf, AFMG Technologies GmbH - Berlin, Germany; Kilian Köhler, IBS Audio - Berlin, Germany; Holger Schmalle, AFMG Technologies GmbH - Berlin, Germany

Over the last two decades loudspeaker arrays have been employed increasingly for sound reinforcement. Their high output power and focusing ability facilitate extensive control capabilities as well as extraordinary performance. Based on acoustic simulation, numerical optimization of the array configuration, particularly of FIR filters, adds a new level of flexibility. Radiation characteristics can be established that are not available for conventionally tuned sound systems. It is shown that substantial improvements in sound field uniformity and output SPL can be achieved. Different real-world case studies are presented based on systematic measurements and simulations. Important practical implementation aspects are discussed such as the spatial resolution of driven sources, the number of FIR coefficients, and the quality of loudspeaker data.

Convention Paper 8937

Thursday, October 17, 2:30 pm — 5:00 pm (Room 1E09)

Paper Session: P5 - Signal Processing—Part 2

Chair:

Juan Pablo Bello, New York University - New York, NY, USA

P5-1 Evaluation of Dynamics Processors’ Effects Using Signal Statistics—Tim Shuttleworth, Renkus Heinz - Oceanside, CA, USA

Existing methods of evaluating the action of dynamics processors (i.e., limiters, compressors, expanders, and gates) do not provide results that correlate directly with the perceived and actual effect on the signal's dynamics, that is, aspects such as crest factor, dynamic range, and subjective acceptability of the processed signal, or the degree of optimization of the use of the transmission medium. A method is described that uses statistical analysis of the pre- and post-processed signal to allow the processor’s action to be characterized in a manner that correlates to the perceived effects and actual modification of signal dynamics. A number of signal statistical and user-definable characteristics are introduced and, in addition to well-known statistical techniques, form the basis for this evaluation method.

Convention Paper 8938
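Crest factor, one of the quantities named in the P5-1 abstract, is the ratio of peak level to RMS level. The sketch below is not the statistical method described in the paper. It only shows how a pre- and post-processing comparison of this one quantity might look, using a hypothetical hard limiter and a synthetic sine wave as stand-ins for a real processor and programme material.

```python
import math

def crest_factor_db(samples):
    """Peak-to-RMS ratio of a block of samples, in dB."""
    peak = max(abs(x) for x in samples)
    rms = math.sqrt(sum(x * x for x in samples) / len(samples))
    return 20.0 * math.log10(peak / rms)

def hard_limit(samples, ceiling):
    """Hypothetical stand-in processor: clip every sample to +/- ceiling."""
    return [max(-ceiling, min(ceiling, x)) for x in samples]

# Ten full cycles of a sine wave (100 samples per cycle) as the test signal.
sine = [math.sin(2.0 * math.pi * n / 100.0) for n in range(1000)]
limited = hard_limit(sine, 0.5)

print("crest factor before limiting (dB):", crest_factor_db(sine))
print("crest factor after limiting (dB): ", crest_factor_db(limited))
```

A limiter lowers the peak more than it lowers the RMS level, so the crest factor of the limited signal comes out smaller than that of the original sine.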

P5-2 A New Ultra Low Delay Audio Communication Coder—Brijesh Singh Tiwari, ATC Labs - Noida, India; Midathala Harish, ATC Labs - Noida, India; Deepen Sinha, ATC Labs - Newark, NJ, USA

We propose a new full-bandwidth audio codec with an algorithmic delay as low as 0.67 ms and at most 2.7 ms. Low delay is a critical requirement in many real-time applications such as networked music performances, wireless speakers and microphones, and Bluetooth devices. The proposed Ultra Low Delay Audio Communication Codec (ULDACC) is a perceptual transform codec utilizing very small transform windows, the shape of which is optimized to compensate for the lack of frequency resolution. A specially adapted psychoacoustic model and intra-frame coding techniques are employed to achieve transparent audio quality for bit rates approaching 128 kbps/channel at an algorithmic delay of about 1 ms.

Convention Paper 8939

P5-3 Cascaded Long Term Prediction of Polyphonic Signals for Low Power Decoders—Tejaswi Nanjundaswamy, University of California, Santa Barbara - Santa Barbara, CA, USA; Kenneth Rose, University of California, Santa Barbara - Santa Barbara, CA, USA

An optimized cascade of long term prediction filters, each corresponding to an individual periodic component of the polyphonic audio signal, was shown in our recent work to be highly effective as an inter-frame prediction tool for low delay audio compression. The earlier paradigm involved backward adaptive parameter estimation, and hence significantly higher decoder complexity, which is unsuitable for applications that pose a stringent power constraint on the decoder. This paper overcomes this limitation via extension to include forward adaptive parameter estimation, in two modes that trade complexity for side information: (i) a subset of parameters is sent as side information and the remainder is estimated backward-adaptively; (ii) all parameters are sent as side information. We further exploit inter-frame parameter dependencies to minimize the side information rate. Objective and subjective evaluation results clearly demonstrate substantial gains and effective control of the tradeoff between rate-distortion performance and decoder complexity.

Convention Paper 8940

P5-4 Voice Coding with Opus—Koen Vos, vocTone - San Francisco, CA, USA; Karsten Vandborg Sørensen, Microsoft - Stockholm, Sweden; Søren Skak Jensen, GN Netcom A/S - Ballerup, Denmark; Jean-Marc Valin, Mozilla Corporation - Mountain View, CA, USA

In this paper we describe the voice mode of the Opus speech and audio codec. As only the decoder is standardized, the details in this paper will help anyone who wants to modify the encoder or gain a better understanding of the codec. We go through the main components that constitute the voice part of the codec, provide an overview, give insights, and discuss the design decisions made during the development. Tests have shown that Opus quality is comparable to or better than several state-of-the-art voice codecs, while covering a much broader application area than competing codecs.

Convention Paper 8941

P5-5 High-Quality, Low-Delay Music Coding in the Opus Codec—Jean-Marc Valin, Mozilla Corporation - Mountain View, CA, USA; Gregory Maxwell, Mozilla Corporation; Timothy B. Terriberry, Mozilla Corporation; Koen Vos, vocTone - San Francisco, CA, USA

The IETF recently standardized the Opus codec as RFC 6716. Opus targets a wide range of real-time Internet applications by combining a linear prediction coder with a transform coder. We describe the transform coder, with particular attention to the psychoacoustic knowledge built into the format. The result out-performs existing audio codecs that don't operate under real-time constraints.

Convention Paper 8942

Thursday, October 17, 3:00 pm — 4:30 pm (1E Foyer)

Poster: P6 - Spatial Audio

P6-1 Improvement of 3-D Sound Systems by Vertical Loudspeaker Arrays—Akira Saji, University of Aizu - Aizuwakamatsu City, Japan; Keita Tanno, University of Aizu - Aizuwakamatsu, Fukushima, Japan; Jie Huang, University of Aizu - Aizuwakamatsu City, Japan

Recently we proposed a 3-D sound system using a horizontal arrangement of loudspeakers by combining the effect of HRTF and the amplitude panning method. In that system, loudspeakers are set at the height of the subject's ear level, and the sweet spot is limited by the height of the loudspeakers. When the listener's ear level differs from the loudspeaker height, sound localization becomes difficult or breaks down. However, it is difficult to match the loudspeaker height to the subject's ear level every time. In this paper we aimed to improve the robustness of the 3-D sound system using vertical loudspeaker arrays. Our experiments show that the loudspeaker arrays can improve the robustness of the 3-D sound system.

Convention Paper 8944

P6-2 An Integrated Algorithm for Optimized Playback of Higher Order Ambisonics—Robert E. Davis, University of the West of Scotland - Paisley, Scotland, UK; D. Fraser Clark, University of the West of Scotland - Paisley, Scotland, UK

An algorithm is presented that gives improved playback performance of higher order ambisonic material on practical loudspeaker arrays. The optimizations are based on sound field reproduction theories with additional parameters to account for the compensation of loudspeaker and listener positioning constraints and numbers of listeners. Automatic calculation of loudspeaker distances is also achieved based on room dimensions and a gain calibration routine is incorporated. Results are given to quantify the resulting algorithm performance, informal listening tests were carried out, and aspects of implementation are discussed.

Convention Paper 8945

P6-3 I Hear NY3D: Ambisonic Capture and Reproduction of an Urban Sound Environment—Braxton Boren, New York University - New York, NY, USA; Areti Andreopoulou, New York University - New York, NY, USA; Michael Musick, New York University - New York, NY, USA; Hariharan Mohanraj, New York University - New York, NY, USA; Agnieszka Roginska, New York University - New York, NY, USA

This paper describes “I Hear NY3D,” a project for capturing and reproducing 3D soundfields in New York City. First order Ambisonic recordings of various locations in Manhattan have been made, to be used for both aesthetic and informational purposes. The collected data allows for the creation of high quality, fully immersive auditory soundscapes that can be played back on any periphonic speaker array configuration through real time matrixing. Binaural renderings of the same data are also available for more portable applications.

Convention Paper 8946

P6-4 I Hear NY3D: An Ambisonic Installation Reproducing NYC Soundscapes—Michael Musick, New York University - New York, NY, USA; Areti Andreopoulou, New York University - New York, NY, USA; Braxton Boren, New York University - New York, NY, USA; Hariharan Mohanraj, New York University - New York, NY, USA; Agnieszka Roginska, New York University - New York, NY, USA

This paper describes the development of a reproduction installation for the "I Hear NY3D" project. This project’s aim is the capture and reproduction of immersive soundfields around Manhattan. A means of creating an engaging reproduction of these soundfields through the medium of an installation will also be discussed. The goal for this installation is an engaging, immersive experience that allows participants to create connections to the soundscapes and observe relationships between the soundscapes. This required the consideration of how to best capture and reproduce these recordings, the presentation of simultaneous multiple soundscapes, and a means of interaction with the material.

Convention Paper 8947

P6-5 Auralization of Measured Room Impulse Responses Considering Head Movements—Anthony Parks, Rensselaer Polytechnic Institute - Troy, NY, USA; Jonas Braasch, Rensselaer Polytechnic Institute - Troy, NY, USA; Samuel W. Clapp, Rensselaer Polytechnic Institute - Troy, NY, USA

The purpose of this paper is to describe a novel method for auralizing measured room impulse responses over headphones using impulse responses recorded from a 16-channel spherical microphone array, decoded to eight virtual loudspeakers, and mixed down binaurally using nonindividualized HRTFs. The novelty of this method lies not in the ambisonic binaural-mixdown process but in the use of head pose estimation code from the Kinect API, sent to a Max/MSP patch using Open Sound Control messages. This provides a fast, reliable way to auralize over headphones while allowing for head movements: rotating the spherical harmonics to match the listener's head rotation removes the need for head-related transfer function interpolation.

Convention Paper 8948 (Purchase now)
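The head-rotation step described in P6-5 can be illustrated with a minimal sketch. It assumes a first-order B-format signal (W, X, Y, Z) and yaw-only rotation, which is a simplification: the paper's 16-channel array supports higher orders, and its actual code is not given in the abstract. The function names and the 1/sqrt(2) weighting on W are assumptions made for illustration.

```python
import math

def encode_plane_wave(azimuth_rad, gain=1.0):
    """Encode a horizontal source into first-order B-format (W, X, Y, Z).

    Assumption: traditional B-format with W scaled by 1/sqrt(2).
    """
    return (gain / math.sqrt(2.0),
            gain * math.cos(azimuth_rad),
            gain * math.sin(azimuth_rad),
            0.0)

def rotate_foa_yaw(frame, angle_rad):
    """Rotate the sound field about the vertical axis by angle_rad.

    Only X and Y mix under a yaw rotation; W and Z are unchanged.
    """
    w, x, y, z = frame
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (w, c * x - s * y, s * x + c * y, z)

def compensate_head_yaw(frame, head_yaw_rad):
    """Counter-rotate the field so sources stay fixed in the room."""
    return rotate_foa_yaw(frame, -head_yaw_rad)

# A source at 30 degrees; the listener turns the head 30 degrees toward it,
# so after compensation the source should sit straight ahead.
source = encode_plane_wave(math.radians(30.0))
heard = compensate_head_yaw(source, math.radians(30.0))
```

Because the rotation acts on the spherical harmonic signals before decoding, the virtual loudspeaker HRTFs stay fixed and no HRTF interpolation is needed.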

P6-6 Reduced Representations of HRTF Datasets: A Discriminant Analysis Approach—Areti Andreopoulou, New York University - New York, NY, USA; Agnieszka Roginska, New York University - New York, NY, USA; Juan Pablo Bello, New York University - New York, NY, USA

This paper discusses reduced representations of HRTF datasets, fully descriptive of one’s personalized properties. The data reduction is achieved through elimination of the least discriminative binaural-filter pairs from a set. For this purpose Linear Discriminant Analysis (LDA) was applied on the Music and Audio Research Laboratory (MARL) database of repeated HRTF measurements, which resulted in 67% data reduction. The effectiveness of the sparse HRTF mapping is assessed by way of the performance of a database matching system, followed by a subjective evaluation study. The results indicate that participants have demonstrated strong preference towards the selected HRTF sets, in contrast to the generic KEMAR set and the least similar selections from the repository.

Convention Paper 8949 (Purchase now)

P6-7 Investigation of HRTF Sets Using Content with Limited Spatial Resolution—Johann-Markus Batke, Audio & Acoustics, Technicolor Research & Innovation - Hannover, Germany; Stefan Abeling, Audio & Acoustics, Technicolor Research & Innovation - Hannover, Germany; Stefan Balke, Leibniz Universität Hannover - Hannover, Germany; Gerald Enzner, Ruhr-Universität Bochum - Bochum, Germany

Headphone rendering of sound fields represented by Higher Order Ambisonics (HOA) is greatly facilitated by the binaural synthesis of virtual loudspeakers. Individualized head-related transfer function (HRTF) sets corresponding to the spatial positions of the virtual loudspeakers are used in conjunction with head-tracking to achieve the externalization of the sound event. We investigate the localization accuracy for HOA representations of limited spatial resolution using individualized and generic HRTF sets.

Convention Paper 8950 (Purchase now)

Thursday, October 17, 4:30 pm — 7:00 pm (Room 1E07)

Paper Session: P7 - Spatial Audio—Part 1

Chair:

Wieslaw Woszczyk, McGill University - Montreal, QC, Canada

P7-1 Reproducing Real-Life Listening Situations in the Laboratory for Testing Hearing Aids—Pauli Minnaar, Oticon A/S - Smørum, Denmark; Signe Frølund Albeck, Oticon A/S - Smørum, Denmark; Christian Stender Simonsen, Oticon A/S - Smørum, Denmark; Boris Søndersted, Oticon A/S - Smørum, Denmark; Sebastian Alex Dalgas Oakley, Oticon A/S - Smørum, Denmark; Jesper Bennedbæk, Oticon A/S - Smørum, Denmark

The main purpose of the current study was to demonstrate how a Virtual Sound Environment (VSE), consisting of 29 loudspeakers, can be used in the development of hearing aids. A listening test was done by recording everyday sound scenes with a spherical microphone array that has 32 microphone capsules. The playback in the VSE was implemented by convolving the recordings with inverse filters, which were derived by directly inverting a matrix of 928 measured transfer functions. While listening to 5 sound scenes, 10 hearing-impaired listeners could switch between hearing aid settings in real time, by interacting with a touch screen in a MUSHRA-like test. The setup proves to be very valuable for ensuring that hearing aid settings work well in real-world situations.

Convention Paper 8951 (Purchase now)
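The figure of 928 transfer functions in P7-1 is consistent with 29 loudspeakers times 32 microphone capsules. The sketch below shows one way such a matrix could be inverted at a single frequency bin. It is a regularized least-squares solve, not necessarily the direct inversion the authors used; the function names and the `beta` regularization parameter are assumptions.

```python
N_SPEAKERS, N_MICS = 29, 32
N_TRANSFER_FUNCTIONS = N_SPEAKERS * N_MICS  # one per speaker/capsule pair

def conj_transpose(a):
    return [[a[r][c].conjugate() for r in range(len(a))]
            for c in range(len(a[0]))]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def solve(a, b):
    """Solve a @ x = b for square a (Gauss-Jordan, partial pivoting)."""
    n = len(a)
    m = [[complex(v) for v in a[i]] + [complex(b[i])] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [vr - f * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def speaker_weights(h, target, beta=0.0):
    """Least-squares loudspeaker weights for one frequency bin.

    h: mics x speakers transfer matrix, target: desired capsule pressures.
    Computes (h^H h + beta I)^-1 h^H target.
    """
    hh = conj_transpose(h)
    gram = matmul(hh, h)
    for i in range(len(gram)):
        gram[i][i] += beta
    rhs = matmul(hh, [[t] for t in target])
    return solve(gram, [row[0] for row in rhs])

# Toy case: 3 capsules, 2 loudspeakers, known weights [2, -1j].
h = [[1, 0], [0, 1], [1, 1]]
target = [2, -1j, 2 - 1j]
weights = speaker_weights(h, target)
```

Repeating the solve for every frequency bin and transforming back to the time domain would give one inverse filter per loudspeaker and capsule; that step is omitted here.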

P7-2 Measuring Speech Intelligibility in Noisy Environments Reproduced with Parametric Spatial Audio—Teemu Koski, Aalto University - Espoo, Finland; Ville Sivonen, Cochlear Nordic AB - Vantaa, Finland; Ville Pulkki, Aalto University - Espoo, Finland; Technical University of Denmark - Denmark

This work introduces a method for speech intelligibility testing in reproduced sound scenes. The proposed method uses background sound scenes augmented by target speech sources and reproduced over a multichannel loudspeaker setup with time-frequency domain parametric spatial audio techniques. Subjective listening tests were performed to validate the proposed method: speech recognition thresholds (SRT) in noise were measured in a reference sound scene and in a room where the reference was reproduced by a loudspeaker setup. The listening tests showed that for normal-hearing test subjects the method provides nearly the same speech intelligibility as the real-life reference when using a nine-loudspeaker reproduction setup in anechoic conditions (<0.3 dB error in SRT). Due to the flexible technical requirements, the method is potentially applicable to clinical environments.

AES 135th Convention Student Technical Papers Award Cowinner

Convention Paper 8952 (Purchase now)

P7-3 On the Influence of Headphones on Localization of Loudspeaker Sources—Darius Satongar, University of Salford - Salford, Greater Manchester, UK; Chris Pike, BBC Research and Development - Salford, Greater Manchester, UK; University of York - Heslington, York, UK; Yiu W. Lam, University of Salford - Salford, UK; Tony Tew, University of York - York, UK

When validating systems that use headphones to synthesize virtual sound sources, a direct comparison between virtual and real sources is sometimes needed. This paper presents objective and subjective measurements of the influence of headphones on external loudspeaker sources. Objective measurements of the effect of a number of headphone models are given and analyzed using an auditory filter bank and binaural cue extraction. Objective results highlight that all of the headphones had an effect on localization cues. A subjective localization test was undertaken using one of the best performing headphones from the measurements. It was found that the presence of the headphones caused a small increase in localization error but also that the process of judging source location was different, highlighting a possible increase in the complexity of the localization task.

Convention Paper 8953 (Purchase now)

P7-4 Binaural Reproduction of 22.2 Multichannel Sound with Loudspeaker Array Frame—Kentaro Matsui, NHK Science & Technology Research Laboratories - Setagaya, Tokyo, Japan; Akio Ando, NHK Science & Technology Research Laboratories - Setagaya-ku, Tokyo, Japan

NHK has proposed a 22.2 multichannel sound system to be an audio format for future TV broadcasting. The system consists of 22 loudspeakers and 2 low frequency effect loudspeakers for reproducing three-dimensional spatial sound. To allow 22.2 multichannel sound to be reproduced in homes, various reproduction methods that use fewer loudspeakers have been investigated. This paper describes binaural reproduction of 22.2 multichannel sound with a loudspeaker array frame integrated into a flat panel display. The processing for binaural reproduction is done in the frequency domain. Methods of designing inverse filters for binaural processing with expanded multiple control points are proposed to enlarge the listening area outside the sweet spot.

Convention Paper 8954 (Purchase now)

P7-5 An Offline Binaural Converting Algorithm for 3D Audio Contents: A Comparative Approach to the Implementation Using Channels and Objects—Romain Boonen, SAE Institute Brussels - Brussels, Belgium

This paper describes and compares two offline binaural converting algorithms based on HRTFs (Head-Related Transfer Functions) for 3D audio contents. Recognizing the widespread use of headphones by the typical modern audio content consumer, two strategies to binaurally translate the 3D mixes are explored in order to give a convincing 3D aural experience "on the go." Aiming for the best output quality possible and avoiding the compromises inherent to real-time processing, the paper compares the channel- and the object-based models, notably looking respectively into the spectral analysis of channels for usage of HRTFs at intermediate positions between the virtual speakers and the dynamic convolution of the HRTFs with the objects according to their position coordinates in time.

Convention Paper 8955 (Purchase now)

Thursday, October 17, 4:30 pm — 7:00 pm (Room 1E11)

Workshop: W8 - Digital Room Correction—Does it Really Work?

Chair:Bob Katz, Digital Domain Mastering - Orlando, FL, USA

Panelists:

Ulrich Brüggemann, AudioVero - Herzebrock, Germany

Michael Chafee, Michael Chafee Enterprises - Sarasota, FL, USA

Will Eggleston, Genelec Inc. - Natick, MA, USA

Curt Hoyt, 3D Audio Consultant - Huntington Beach, CA, USA; Trinnov Audio USA Operations

Floyd Toole, Acoustical consultant to Harman, ex. Harman VP Acoustical Engineering - Oak Park, CA, USA

Abstract:

The practice of digital room and loudspeaker correction (DRC) is an especially fruitful beneficiary of Moore's law and increased skills among DSP programmers. DRC is a hot topic of interest for recording, mixing and mastering engineers, and studio designers. The workshop will explore the principles of DRC with three panelists and an expert guest.

This session is presented in association with the AES Technical Committee on Acoustics and Sound Reinforcement and AES Technical Committee on Recording Technology and Practices

Thursday, October 17, 5:00 pm — 7:00 pm (Room 1E15/16)

Special Event: Producing Across Generations: New Challenges, New Solutions—Making Records for Next to Nothing in the 21st Century

Moderator:Nick Sansano

Panelists:

Frank Filipetti, the living room - West Nyack, NY, USA; METAlliance

Jesse Lauter, New York, NY, USA

Carter Matschullat

Bob Power

Kaleb Rollins, Grand Staff, LLC - Brooklyn, NY, USA

Hank Shocklee

Craig Street, Independent - New York, NY, USA

Abstract:

Budgets are small, retail is dying, studios are closing, fed-up audiences are taking music at will … yet devoted music professionals continue to make records for a living. How are they doing it? How are they getting paid? What type of contracts are they commanding? In a world where the “record” has become an artist’s business card, how will the producer and mixer derive participatory income? Are studio professionals being left out of the so-called 360 deals? Let’s get a quality bunch of young rising producers and a handful of seasoned vets in a room and finally open the discussion about empowerment and controlling our own destiny.

Friday, October 18, 9:00 am — 11:00 am (Room 1E11)

Game Audio: G3 - Scoring "Tomb Raider": The Music of the Game

Presenter:Alex Wilmer, Crystal Dynamics

Abstract:

"Tomb Raider's" score has been critically acclaimed as being uniquely immersive and at a level of quality on par with film. It is a truly scored experience that has raised the bar for the industry. To achieve this, new techniques in almost every part of the music's production needed to be developed. This talk will focus on the process of scoring "Tomb Raider." Every aspect will be covered, from the music's creative direction, composition, and implementation to the technology behind it.

Friday, October 18, 9:00 am — 12:00 pm (Room 1E07)

Paper Session: P8 - Recording and Production

Chair:

Richard King, McGill University - Montreal, Quebec, Canada; The Centre for Interdisciplinary Research in Music Media and Technology - Montreal, Quebec, Canada

P8-1 Music Consumption Behavior of Generation Y and the Reinvention of the Recording Industry—Barry Marshall, The New England Institute of Art - Brookline, MA, USA

This paper will give an overview of the last 15 years of the recording industry’s problems with piracy and decreasing sales, while reporting on research into the music consumption behavior of a group of audio students in both the United States and eight European countries. Audio students have a unique perspective on the issues surrounding the recording industry’s problems since the advent of Napster and the later generations of illegal file sharing. Their insights into issues like the importance of access to music, the quality of the listening experience, and the ethical quandary of participating in copyright infringement may help point to a direction for the future of the recording industry.

Convention Paper 8956 (Purchase now)

P8-2 (Re)releasing the Beatles—Brett Barry, Syracuse University - Syracuse, NY, USA

This paper presents a comparative analysis of various Beatles releases, including original 1960s vinyl, early compact discs, and present-day digital downloads through services like iTunes. I will provide original research using source material and interviews with persons directly involved in recording and releasing Beatles albums, examining variations in dynamic range, spectral distribution, psychoacoustics, and track anomalies. Considerations are given to mastering and remastering a catalog of classics.

Convention Paper 8957 (Purchase now)

P8-3 Maximum Averaged and Peak Levels of Vocal Sound Pressure—Braxton Boren, New York University - New York, NY, USA; Agnieszka Roginska, New York University - New York, NY, USA; Brian Gill, New York University - New York, NY, USA

This work describes research on the maximum sound pressure level achievable by the spoken and sung human voice. Trained actors and singers were measured for peak and averaged SPLs at an on-axis distance of 1 m at three different subjective dynamic levels and also for two different vocal techniques (“back” and “mask” voices). The “back” sung voice was found to achieve a consistently lower SPL than the “mask” voice at a corresponding dynamic level. Some singers were able to achieve high averaged levels with both spoken and sung voice, while others produced much higher levels singing than speaking. A few of the vocalists were able to produce averaged levels above 90 dBA, the highest found in the existing literature.

Convention Paper 8958 (Purchase now)

P8-4 Listener Adaptation in the Control Room: The Effect of Varying Acoustics on Listener Familiarization—Richard King, McGill University - Montreal, Quebec, Canada; The Centre for Interdisciplinary Research in Music Media and Technology - Montreal, Quebec, Canada; Brett Leonard, McGill University - Montreal, Quebec, Canada; The Centre for Interdisciplinary Research in Music Media and Technology - Montreal, Quebec, Canada; Scott Levine, Skywalker Sound - San Francisco, CA, USA; The Centre for Interdisciplinary Research in Music Media and Technology - Montreal, Quebec, Canada; Grzegorz Sikora, Bang & Olufsen Deutschland GmbH - Pullach, Germany

The area of auditory adaptation is of central importance to a recording engineer operating in unfamiliar or less-than-ideal acoustic conditions. This study prompts expert listeners to perform a controlled level-balancing task while exposed to three different acoustic conditions. The length of exposure is varied to test the role of adaptation on such a task. Results show that there is a significant difference in the variance of participants’ results when exposed to one condition for a longer period of time. In particular, subjects seem to most easily adapt to reflective acoustic conditions.

Convention Paper 8959 (Purchase now)

P8-5 Spectral Characteristics of Popular Commercial Recordings 1950-2010—Pedro Duarte Pestana, Catholic University of Oporto - CITAR - Oporto, Portugal; Lusíada University of Portugal (ILID); Centro de Estatística e Aplicacoes; Zheng Ma, Queen Mary University of London - London, UK; Joshua D. Reiss, Queen Mary University of London - London, UK; Alvaro Barbosa, Catholic University of Oporto - CITAR - Oporto, Portugal; Dawn A. A. Black, Queen Mary University of London - London, UK

In this work the long-term spectral contours of a large dataset of popular commercial recordings were analyzed. The aim was to analyze overall trends, as well as yearly and genre-specific ones. A novel method for averaging spectral distributions is proposed that yields results that lend themselves to comparison. With it, we found that there is a consistent leaning toward a target equalization curve that stems from practices in the music industry but also to some extent mimics natural, acoustic spectra of ensembles.

Convention Paper 8960 (Purchase now)

P8-6 A Knowledge-Engineered Autonomous Mixing System—Brecht De Man, Queen Mary University of London - London, UK; Joshua D. Reiss, Queen Mary University of London - London, UK

In this paper a knowledge-engineered mixing engine is introduced that uses semantic mixing rules and bases mixing decisions on instrument tags as well as elementary, low-level signal features. Mixing rules are derived from practical mixing engineering textbooks. The performance of the system is compared to existing automatic mixing tools as well as human engineers by means of a listening test, and future directions are established.

Convention Paper 8961 (Purchase now)

Friday, October 18, 9:00 am — 11:30 am (Room 1E09)

Paper Session: P9 - Applications in Audio—Part I

Chair:

Sungyoung Kim, Rochester Institute of Technology - Rochester, NY, USA

P9-1 Audio Device Representation, Control, and Monitoring Using SNMP—Andrew Eales, Wellington Institute of Technology - Wellington, New Zealand; Rhodes University - Grahamstown, South Africa; Richard Foss, Rhodes University - Grahamstown, Eastern Cape, South Africa

The Simple Network Management Protocol (SNMP) is widely used to configure and monitor networked devices. The architecture of complex audio devices can be elegantly represented using SNMP tables. Carefully considered table indexing schemes support a logical device model that can be accessed using standard SNMP commands. This paper examines the use of SNMP tables to represent the architecture of audio devices. A representational scheme that uses table indexes to provide direct-access to context-sensitive SNMP data objects is presented. The monitoring of parameter values and the implementation of connection management using SNMP are also discussed.

Convention Paper 8962 (Purchase now)
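As a rough illustration of the table-indexing idea in P9-1, the sketch below models an SNMP table as object identifiers formed from a column OID plus an index suffix, with GET and GETNEXT following lexicographic OID order. The enterprise subtree `99999`, the gain column, and the (block, channel) index layout are all hypothetical; the paper's actual MIB design is not given in the abstract.

```python
# Hypothetical enterprise subtree and table column, for illustration only.
BASE = (1, 3, 6, 1, 4, 1, 99999, 1)
GAIN_COLUMN = BASE + (1, 2)  # table entry 1, column 2: gain in dB

def instance_oid(column, *index):
    """A table cell is addressed by its column OID plus the row index."""
    return column + tuple(index)

# Rows are indexed by (block, channel), so the index itself encodes
# where the parameter sits in the device architecture.
mib = {
    instance_oid(GAIN_COLUMN, 1, 1): -6.0,
    instance_oid(GAIN_COLUMN, 1, 2): -3.0,
    instance_oid(GAIN_COLUMN, 2, 1): 0.0,
}

def snmp_get(oid):
    """Direct access to one cell, like an SNMP GET."""
    return mib[oid]

def snmp_getnext(oid):
    """Next cell in lexicographic OID order, like an SNMP GETNEXT."""
    later = sorted(k for k in mib if k > oid)
    return (later[0], mib[later[0]]) if later else None

gain_block1_ch2 = snmp_get(instance_oid(GAIN_COLUMN, 1, 2))
first_cell = snmp_getnext(GAIN_COLUMN)
after_block1 = snmp_getnext(instance_oid(GAIN_COLUMN, 1, 2))
```

Python tuples compare element by element, which matches the lexicographic ordering SNMP uses when walking a table.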

P9-2 IP Audio in the Real-World; Pitfalls and Practical Solutions Encountered and Implemented when Rolling Out the Redundant Streaming Approach to IP Audio—Kevin Campbell, WorldCast Systems /APT - Belfast, N Ireland; Miami, Florida

This paper will review the development of IP audio links for audio delivery and chiefly look at the possibility of harnessing the flexibility and cost-effectiveness of the public internet for professional audio delivery. We will discuss first the benefits of IP audio when measured against traditional synchronous audio delivery and also the typical problems associated with delivering real-time broadcast audio across packetized networks, specifically in the context of unmanaged IP networks. The paper contains an examination of some techniques employed to overcome these issues with an in-depth look at the redundant packet streaming approach.

Convention Paper 8963 (Purchase now)
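The redundant packet streaming approach named in P9-2 can be sketched at the receiver as follows, assuming each packet carries a sequence number and the same audio is sent over two or more independent streams. This is a generic illustration, not the vendor's implementation; it ignores sequence-number wraparound and jitter buffering, and all names are hypothetical.

```python
def merge_redundant_streams(arrivals):
    """Keep the first copy of each sequence number, from any stream.

    arrivals: iterable of (stream_id, seq, payload) in arrival order.
    Returns a dict mapping seq -> payload.
    """
    first_copy = {}
    for _stream_id, seq, payload in arrivals:
        first_copy.setdefault(seq, payload)  # later duplicates are dropped
    return first_copy

def missing_sequences(received, first_seq, last_seq):
    """Sequence numbers that no stream delivered."""
    return [s for s in range(first_seq, last_seq + 1) if s not in received]

# Stream A loses packet 2 and stream B loses packet 3, yet the merged
# output is complete because the losses do not coincide.
arrivals = [
    ("A", 1, b"p1"), ("B", 1, b"p1"),
    ("B", 2, b"p2"),
    ("A", 3, b"p3"),
    ("A", 4, b"p4"), ("B", 4, b"p4"),
]
merged = merge_redundant_streams(arrivals)
gaps = missing_sequences(merged, 1, 4)
```

The benefit depends on the streams failing independently, which is why such schemes typically route them over different network paths.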

P9-3 Implementation of AES-64 Connection Management for Ethernet Audio/Video Bridging Devices—James Dibley, Rhodes University - Grahamstown, South Africa; Richard Foss, Rhodes University - Grahamstown, Eastern Cape, South Africa

AES-64 is a standard for the discovery, enumeration, connection management, and control of multimedia network devices. This paper describes the implementation of an AES-64 protocol stack and control application on devices that support the IEEE Ethernet Audio/Video Bridging standards for streaming multimedia, enabling connection management of network audio streams.

Convention Paper 8964 (Purchase now)

P9-4 Simultaneous Acquisition of a Massive Number of Audio Channels through Optical Means—Gabriel Pablo Nava, NTT Communication Science Laboratories - Kanagawa, Japan; Yutaka Kamamoto, NTT Communication Science Laboratories - Kanagawa, Japan; Takashi G. Sato, NTT Communication Science Laboratories - Kanagawa, Japan; Yoshifumi Shiraki, NTT Communication Science Laboratories - Kanagawa, Japan; Noboru Harada, NTT Communication Science Labs - Atsugi-shi, Kanagawa-ken, Japan; Takehiro Moriya, NTT Communication Science Labs - Atsugi-shi, Kanagawa-ken, Japan

Sensing sound fields at multiple locations can become considerably time-consuming and expensive when large wired sensor arrays are involved. Although several techniques have been developed to reduce the number of necessary sensors, less work has been reported on efficient techniques to acquire the data from all the sensors. This paper introduces an optical system, based on the concept of visible light communication, which allows the simultaneous acquisition of audio signals from a massive number of channels via arrays of light emitting diodes (LEDs) and a high speed camera. Similar approaches use LEDs to express the sound pressure of steady state fields as a scaled luminous intensity. The proposed sensor units, in contrast, optically transmit the actual digital audio signal sampled by the microphone in real time. Experiments to illustrate two examples of typical applications are presented: a remote acoustic imaging sensor array and a spot beamforming based on the compressive sampling theory. Implementation issues are also addressed to discuss the potential scalability of the system.

Convention Paper 8965 (Purchase now)

P9-5 Blind Microphone Analysis and Stable Tone Phase Analysis for Audio Tampering Detection—Luca Cuccovillo, Fraunhofer Institute for Digital Media Technology IDMT - Ilmenau, Germany; Sebastian Mann, Fraunhofer Institute for Digital Media Technology IDMT - Ilmenau, Germany; Patrick Aichroth, Fraunhofer Institute for Digital Media Technology IDMT - Ilmenau, Germany; Marco Tagliasacchi, Politecnico di Milano - Milan, Italy; Christian Dittmar, Fraunhofer Institute for Digital Media Technology IDMT - Ilmenau, Germany

In this paper we present an audio tampering detection method based on the combination of blind microphone analysis and phase analysis of stable tones, e.g., the electrical network frequency (ENF). The proposed algorithm uses phase analysis to detect segments that might have been tampered with. Afterwards, the segments are further analyzed using a feature vector able to discriminate among different microphone types. Using this combined approach, it is possible to achieve a significantly lower false-positive rate and higher reliability as compared to standalone phase analysis.

Convention Paper 8966 (Purchase now)
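The phase-analysis stage mentioned in P9-5 can be illustrated with a generic sketch: estimate the phase of a stable tone frame by frame against an absolute time reference and flag frames where the phase jumps. It assumes the tone frequency is known exactly and constant, which real ENF is not, and it does not reproduce the authors' algorithm or the microphone-analysis stage. Function names and the threshold are assumptions.

```python
import cmath
import math

def frame_phase(samples, freq_hz, sample_rate, start_index):
    """Phase of the tone in one frame, referenced to absolute sample time."""
    acc = sum(
        s * cmath.exp(-2j * math.pi * freq_hz * (start_index + n) / sample_rate)
        for n, s in enumerate(samples)
    )
    return cmath.phase(acc)

def phase_jumps(signal, freq_hz, sample_rate, frame_len, threshold_rad=0.5):
    """Indices of frames whose phase differs from the previous frame's."""
    starts = range(0, len(signal) - frame_len + 1, frame_len)
    phases = [frame_phase(signal[i:i + frame_len], freq_hz, sample_rate, i)
              for i in starts]
    flagged = []
    for k in range(1, len(phases)):
        # Wrap the difference into (-pi, pi] before thresholding.
        diff = cmath.phase(cmath.exp(1j * (phases[k] - phases[k - 1])))
        if abs(diff) > threshold_rad:
            flagged.append(k)
    return flagged

# A clean 50 Hz tone at 1 kHz sampling, and a copy with 5 samples cut out
# at sample 400 (a quarter-cycle deletion).
RATE, TONE, FRAME = 1000, 50.0, 200
clean = [math.sin(2 * math.pi * TONE * n / RATE) for n in range(1000)]
edited = clean[:400] + clean[405:]
clean_flags = phase_jumps(clean, TONE, RATE, FRAME)
edited_flags = phase_jumps(edited, TONE, RATE, FRAME)
```

In this toy case the deletion shows up as a quarter-cycle phase step at the third frame (index 2), while the untouched recording produces no flags.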

Friday, October 18, 10:30 am — 12:00 pm (Room 1E13)

Workshop: W12 - FX Design Panel: Equalization

Chair:Francis Rumsey, Logophon Ltd. - Oxfordshire, UK

Panelists:

Nir Averbuch, Sound Radix Ltd. - Israel

George Massenburg, Schulich School of Music, McGill University - Montreal, Quebec, Canada

Saul Walker, New York University - New York, NY, USA

Abstract:

Meet the designers whose talents and philosophies are reflected in the products they create, driving sound quality, ease of use, reliability, price, and all the other attributes that motivate us to patch, click and tweak their effects processors.

Friday, October 18, 11:00 am — 12:00 pm (Room 1E15/16)

Special Event: Friday Keynote: The Current and Future Direction of the Recording Process from an Artist, Engineer, and Producer’s Perspective

Presenter:Jimmy Jam, Flyte Tyme Productions

Abstract:

Five-time GRAMMY Award winner Jimmy Jam is a renowned songwriter, record producer, musician, entrepreneur, and half of the most influential and successful writing/producing duo in modern music history. Since forming their company Flyte Tyme Productions in 1982, Jam and partner Terry Lewis have collaborated with such diverse and legendary artists as Janet Jackson, Mary J. Blige, Gwen Stefani, Robert Palmer, Mariah Carey, Boyz II Men, Rod Stewart, Yolanda Adams, Sting, Heather Headley, Usher, Celine Dion, Kanye West, Chaka Khan, Trey Songz, and Michael Jackson, among others. Jimmy and Terry have written and/or produced over 100 albums and singles that have reached gold, platinum, multi-platinum, or diamond status, including 26 No. 1 R&B and 16 No. 1 pop hits, giving the pair more Billboard No. 1's than any other duo in chart history. Jimmy Jam’s Lunchtime Keynote address will focus on the current and future direction of the recording process from various perspectives. As a songwriter, artist, engineer, and producer, Jimmy is uniquely qualified to give a bird’s-eye view of how each of these “personalities” interacts and contributes to the overall final product, and along the way, how technology has evolved and what it has meant to his craft. In Jimmy’s words, “Of course it all starts with a great song, but then, it's important to consider how and what technology should be used to capture that creativity. It’s that intersection between the technology and creativity that I have always looked at every day throughout my career. Ultimately, it’s my job as an artist/producer to have those two elements meet and not crash. And that’s when you're using the available technology to capture the artist in their purest form.”

Friday, October 18, 12:00 pm — 1:00 pm (Room 1E13)

Workshop: W13 - Microphone and Recording Techniques for the Music Ensembles of the United States Military Academy

Chair:Brandie Lane, United States Military Academy Band - West Point, NY, USA

Panelist:

Joseph Skinner, United States Army, West Point Band - West Point, NY, USA

Abstract:

The United States Military Academy is home to the oldest active duty military band. Our mission is to provide world-class music to educate, train, and inspire the Corps of Cadets and to serve as ambassadors of the United States Military Academy to the local, national, and international communities. This workshop will discuss advanced microphone and recording techniques (stereo and multi-track) used to capture the different elements of the West Point Band including the Concert Band, Jazz Knights, and Field Music group in a recording/studio or live sound reinforcement setting. The recording of other USMA musical elements including the Cadet Glee Club and Cadet Pipes and Drums will also be discussed.

Friday, October 18, 12:45 pm — 2:15 pm (Room 1E15/16)

Special Event: From the Motor City to Broadway: Making "Motown The Musical" Cast Album

Moderator:Harry Weinger, Universal Music Enterprises (UMe) - New York, NY, USA; New York University - New York, NY, USA

Panelists:

Frank Filipetti, the living room - West Nyack, NY, USA; METAlliance

Jawan Jackson, Motown The Musical - New York, NY, USA

Ethan Popp, Special Guest Music Productions, LLC - New York, NY, USA

Abstract:

Tracing the path taken by pop-R&B classics known the world over to the Broadway stage and the modern-day recording studio—and how cast albums get made with no time and no do-overs.

A panel and Q&A with album producer and mixer Frank Filipetti, a multi-Grammy Award winner, and co-producer Ethan Popp, the show's Tony-nominated musical supervisor.

Moderator: Harry Weinger, VP of A&R at UMe, two-time Grammy winner and album Executive Producer.

Friday, October 18, 1:15 pm — 2:15 pm (Room 1E11)

Special Event: Lunchtime Keynote: On the Transmigration of Souls

Presenter:Michael Bishop, Five/Four Productions, Ltd.

Abstract:

"On the Transmigration of Souls," a multi-Grammy-winning work for orchestra, chorus, children’s choir, and pre-recorded tape, is a composition by John Adams. It was commissioned by the New York Philharmonic and Lincoln Center’s Great Performers, and Mr. Adams received the 2003 Pulitzer Prize in music for the piece. Its premiere recording received the 2005 Grammy Award for Best Classical Album, Best Orchestral Performance, and Best Classical Contemporary Composition and the 2009 Grammy Award for Best Surround Sound Album. Surround Recording Engineer Michael Bishop will discuss the surround production process and play the work in its entirety.

Friday, October 18, 2:00 pm — 2:30 pm (Room 1E07)

Paper Session: P10 - Amplifiers

Chair:

Alexander Voishvillo, JBL/Harman Professional - Northridge, CA, USA

P10-1 Supply-Feedback Fully-Digital Class D Audio Amplifier Featuring 100 dBA+ SNR and 0.5 W to 1 W Selectable Output Power—Rossella Bassoli, ST-Ericsson - Monza Brianza, Italy; Federico Guanziroli, ST-Ericsson - Monza Brianza, Italy; Carlo Crippa, ST-Ericsson - Monza Brianza, Italy; Germano Nicollini, ST-Ericsson - Monza Brianza, Italy

This paper presents a real-time power supply noise correction technique in a fully-digital class D audio amplifier. The power supply is scaled and applied to a 12-bit Nyquist ADC to modify the amplitude of the pulse-width modulator reference carrier. An improved supply extrapolation algorithm results in a power supply rejection one to two orders of magnitude higher than reported implementations. Class D sensitivity to clock jitter is presented. An SNR higher than 100 dBA has been measured in the presence of both power supply ripple and clock jitter. The PWM and output stage are integrated on the same chip in a 0.13 µm digital CMOS technology, whereas an external ADC has been used to demonstrate the validity of the supply-feedback algorithm.

Convention Paper 8968 (Purchase now)
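The supply-feedback principle in P10-1 can be shown with an idealized model, assuming a differential output whose average equals the supply voltage times (2 x duty - 1). Scaling the PWM carrier amplitude in proportion to the measured supply then cancels supply variation. The voltages, ADC full scale, and function names below are hypothetical, and the paper's supply extrapolation algorithm is not modeled.

```python
def duty_cycle(sample, carrier_amplitude):
    """Duty cycle from comparing a sample with a triangular carrier."""
    d = 0.5 * (1.0 + sample / carrier_amplitude)
    return min(1.0, max(0.0, d))

def average_output(sample, supply_v, carrier_amplitude):
    """Average differential output voltage of an ideal class D stage."""
    return supply_v * (2.0 * duty_cycle(sample, carrier_amplitude) - 1.0)

def quantize_supply(supply_v, full_scale_v, bits=12):
    """Model the ADC that digitizes the (scaled) supply voltage."""
    levels = (1 << bits) - 1
    code = round(min(max(supply_v / full_scale_v, 0.0), 1.0) * levels)
    return code * full_scale_v / levels

def corrected_carrier(nominal_amplitude, measured_supply_v, nominal_supply_v):
    """Scale the carrier amplitude in proportion to the measured supply."""
    return nominal_amplitude * measured_supply_v / nominal_supply_v

# Hypothetical operating point: 3.6 V nominal supply with ripple up to 3.9 V.
NOMINAL_V, ACTUAL_V, ADC_FULL_SCALE_V = 3.6, 3.9, 5.0
sample = 0.5

uncorrected = average_output(sample, ACTUAL_V, 1.0)
measured = quantize_supply(ACTUAL_V, ADC_FULL_SCALE_V)
carrier = corrected_carrier(1.0, measured, NOMINAL_V)
corrected = average_output(sample, ACTUAL_V, carrier)
```

Without correction the ripple passes straight to the output (1.95 V instead of 1.8 V here); with the scaled carrier the residual error is set by the ADC resolution.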

Friday, October 18, 2:15 pm — 4:45 pm (Room 1E09)

Paper Session: P11 - Perception—Part 1

Chair:

Jason Corey, University of Michigan - Ann Arbor, MI, USA

P11-1 On the Perceptual Advantage of Stereo Subwoofer Systems in Live Sound Reinforcement—Adam J. Hill, University of Derby - Derby, Derbyshire, UK; Malcolm O. J. Hawksford, University of Essex - Colchester, Essex, UK

Recent research into low-frequency sound-source localization confirms the lowest localizable frequency is a function of room dimensions, source/listener location, and reverberant characteristics of the space. Larger spaces therefore facilitate accurate low-frequency localization and should gain benefit from broadband multichannel live-sound reproduction compared to the current trend of deriving an auxiliary mono signal for the subwoofers. This study explores whether the monophonic approach is a significant limit to perceptual quality and if stereo subwoofer systems can create a superior soundscape. The investigation combines binaural measurements and a series of listening tests to compare mono and stereo subwoofer systems when used within a typical left/right configuration.

Convention Paper 8970 (Purchase now)

P11-2 Auditory Adaptation to Loudspeakers and Listening Room Acoustics—Cleopatra Pike, University of Surrey - Guildford, Surrey, UK; Tim Brookes, University of Surrey - Guildford, Surrey, UK; Russell Mason, University of Surrey - Guildford, Surrey, UK

Timbral qualities of loudspeakers and rooms are often compared in listening tests involving short listening periods. Outside the laboratory, listening occurs over a longer time course. In a study by Olive et al. (1995) smaller timbral differences between loudspeakers and between rooms were reported when comparisons were made over shorter versus longer time periods. This is a form of timbral adaptation, a decrease in sensitivity to timbre over time. The current study confirms this adaptation and establishes that it is not due to response bias but may be due to timbral memory, specific mechanisms compensating for transmission channel acoustics, or attentional factors. Modifications to listening tests may be required where tests need to be representative of listening outside of the laboratory.

Convention Paper 8971 (Purchase now)

P11-3 Perception Testing: Spatial Acuity—P. Nigel Brown, Ex'pression College for Digital Arts - Emeryville, CA, USA

There is a lack of readily accessible data in the public domain detailing individual spatial aural acuity. Introducing new tests of aural perception, this document specifies testing methodologies and apparatus, with example test results and analyses. Tests are presented to measure the resolution of a subject's perception and their ability to localize a sound source. The basic tests are designed to measure minimum discernible change across a 180° horizontal soundfield. More complex tests are conducted over two or three axes for pantophonic or periphonic analysis. Example results are shown from tests including unilateral and bilateral hearing aid users and profoundly monaural subjects. Examples are provided of the applicability of the findings to sound art, healthcare, and other disciplines.

Convention Paper 8972 (Purchase now)

P11-4 Evaluation of Loudness Meters Using Parameterization of Fader Movements—Jon Allan, Luleå University of Technology - Piteå, Sweden; Jan Berg, Luleå University of Technology - Piteå, Sweden

The EBU recommendation R 128 regarding loudness normalization is now generally accepted and countries in Europe are adopting the new recommendation. There is now a need to know more about how and when to use the different meter modes, Momentary and Short term, proposed in R 128, as well as to understand how different implementations of R 128 in audio level meters affect the engineers’ actions. A method is tentatively proposed for evaluating the performance of audio level meters in live broadcasts. The method was used to evaluate different meter implementations, three of them conforming to the recommendation from EBU, R 128. In an experiment, engineers adjusted audio levels in a simulated live broadcast show and the resulting fader movements were recorded. The movements were parameterized into “Fader movement,” “Adjustment time,” “Overshoot,” etc. Results show that the proposed parameters produced significant differences caused by the meters and that the experience of the engineer operating the fader is a significant factor.

Convention Paper 8973 (Purchase now)

P11-5 Validation of the Binaural Room Scanning Method for Cinema Audio Research—Linda A. Gedemer, University of Salford - Salford, UK; Harman International - Northridge, CA, USA; Todd Welti, Harman International - Northridge, CA, USA

Binaural Room Scanning (BRS) is a method of capturing a binaural representation of a room using a dummy head with binaural microphones in the ears and later reproducing it over a pair of calibrated headphones. In this method multiple measurements are made at differing head angles and stored separately as data files. A playback system employing headphones and a headtracker recreates the original environment for the listener, so that as they turn their head, the rendered audio matches the listener's current head angle. This paper reports the results of a validation test of a custom BRS system that was developed for research and evaluation of different loudspeakers and different listening spaces. To validate the performance of the BRS system, listening evaluations of different in-room equalizations of a 5.1 loudspeaker system were made both in situ and via the BRS system. This was repeated using three different loudspeaker systems in three different sized listening rooms.

Convention Paper 8974 (Purchase now)
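
As a rough illustration of the head-tracked playback step described in the P11-5 abstract, the sketch below picks, for a tracked head yaw, the closest of the head angles at which measurements were stored. It is a minimal sketch, not the authors' system: the function name `nearest_measured_angle`, the angle grid, and the nearest-neighbor rule (no interpolation) are all assumptions made for this example.

```python
def nearest_measured_angle(head_yaw_deg, measured_angles_deg):
    """Return the stored measurement angle closest to the tracked head yaw.

    Hypothetical helper: angles are in degrees, and distance is taken
    around the circle so that 350 degrees counts as close to 0 degrees.
    """
    def circular_distance(a, b):
        d = abs(a - b) % 360.0
        return min(d, 360.0 - d)

    return min(measured_angles_deg,
               key=lambda m: circular_distance(head_yaw_deg, m))


# Assumed measurement grid, for illustration only.
measured = [-60, -30, 0, 30, 60]
selected = nearest_measured_angle(12.0, measured)  # selects the 0 degree set
```

A real renderer would typically also crossfade or interpolate between neighboring measurements to avoid audible switching; that is left out here.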

Friday, October 18, 3:00 pm — 4:30 pm (1EFoyer)

Poster: P12 - Signal Processing

P12-1 Temporal Synchronization for Audio Watermarking Using Reference Patterns in the Time-Frequency Domain—Tobias Bliem, Fraunhofer Institute for Integrated Circuits IIS - Erlangen, Germany; Juliane Borsum, Fraunhofer Institute for Integrated Circuits IIS - Erlangen, Germany; Giovanni Del Galdo, Fraunhofer Institute for Integrated Circuits IIS - Erlangen, Germany; Stefan Krägeloh, Fraunhofer Institute for Integrated Circuits IIS - Erlangen, Germany

Temporal synchronization is an important part of any audio watermarking system that involves an analog audio signal transmission. We propose a synchronization method based on the insertion of two-dimensional reference patterns in the time-frequency domain. The synchronization patterns consist of a combination of orthogonal sequences and are continuously embedded along with the transmitted data, so that the information capacity of the watermark is not affected. We investigate the relation between synchronization robustness and payload robustness and show that the length of the synchronization pattern can be used to tune a trade-off between synchronization robustness and the probability of false positive watermark decodings. Interpreting the two-dimensional binary patterns as one-dimensional N-ary sequences, we derive a bound for the autocorrelation properties of these sequences to facilitate an exhaustive search for good patterns.

Convention Paper 8975 (Purchase now)

P12-2 Sound Source Separation Using Interaural Intensity Difference in Real Environments—Chan Jun Chun, Gwangju Institute of Science and Technology (GIST) - Gwangju, Korea; Hong Kook Kim, Gwangju Institute of Science and Tech (GIST) - Gwangju, Korea

In this paper, a sound source separation method is proposed that uses the interaural intensity difference (IID) of stereo audio signals recorded in real environments. First, in order to improve the channel separability, a minimum variance distortionless response (MVDR) beamformer is employed to increase the intensity difference between stereo channels. Then, the IID between the stereo channels processed by the beamformer is computed and applied to sound source separation. The performance of the proposed sound source separation method is evaluated on the stereo audio source separation evaluation campaign (SASSEC) measures. The evaluation shows that the proposed method outperforms a sound source separation method that does not apply a beamformer.

Convention Paper 8976 (Purchase now)
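
To make the quantity in the P12-2 abstract concrete, the sketch below computes an intensity difference in dB between the left and right channels, frame by frame. It is a simplified illustration under stated assumptions, not the proposed method: it works on broadband time-domain frames rather than any particular time-frequency representation, it omits the MVDR beamformer stage, and the names `iid_db` and `framewise_iid` are made up for this example.

```python
import math


def iid_db(left, right, eps=1e-12):
    """Intensity difference between two channels in dB.

    Positive values mean the left channel carries more energy.
    eps is an assumed small constant that avoids log(0) on silence.
    """
    e_left = sum(x * x for x in left)
    e_right = sum(x * x for x in right)
    return 10.0 * math.log10((e_left + eps) / (e_right + eps))


def framewise_iid(left, right, frame_len):
    """IID per non-overlapping frame (hypothetical framing choice)."""
    n = min(len(left), len(right))
    return [iid_db(left[i:i + frame_len], right[i:i + frame_len])
            for i in range(0, n - frame_len + 1, frame_len)]


# A source at half amplitude in the right channel has one quarter
# of the energy there, which is about 6.02 dB of intensity difference.
left = [1.0] * 8
right = [0.5] * 8
per_frame = framewise_iid(left, right, frame_len=4)
```

A separation scheme could then assign each frame (or each time-frequency bin, in a finer-grained version) to a source according to its IID value; how that assignment is done in the paper is not stated in the abstract.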

P12-3 Reverberation and Dereverberation Effect on Byzantine Chants—Alexandros Tsilfidis, accusonus, Patras Innovation Hub - Patras, Greece; Charalampos Papadakos, University of Patras - Patras, Greece; Elias Kokkinis, accusonus - Patras, Greece; Georgios Chryssochoidis, National and Kapodistrian University of Athens - Athens, Greece; Dimitrios Delviniotis, National and Kapodistrian University of Athens - Athens, Greece; Georgios Kouroupetroglou, National and Kapodistrian University of Athens - Athens, Greece; John Mourjopoulos, University of Patras - Patras, Greece

Byzantine music is typically monophonic and is characterized by (i) prolonged music phrases and (ii) Byzantine scales that often contain intervals smaller than the Western semitone. As happens with most religious music genres, reverberation is a key element of Byzantine music. Byzantine churches/cathedrals are usually characterized by particularly diffuse fields and very long Reverberation Time (RT) values. In the first part of this work, the perceptual effect of long reverberation on Byzantine music excerpts is investigated. Then, a case where Byzantine music is recorded in non-ideal acoustic conditions is considered. In such scenarios, a sound engineer might need to add artificial reverb to the recordings. Here it is suggested that the step of adding extra reverberation can be preceded by dereverberation processing to suppress the originally recorded non-ideal reverberation. Therefore, in the second part of the paper a subjective test is presented that evaluates the above sound engineering scenario.

Convention Paper 8977 (Purchase now)

P12-4 Cepstrum-Based Preprocessing for Howling Detection in Speech Applications—Renhua Peng, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Jian Li, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Chengshi Zheng, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Xiaoliang Chen, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Xiaodong Li, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China

Conventional howling detection algorithms exhibit dramatic performance degradations in the presence of harmonic components of speech that have properties similar to those of the howling components. To solve this problem, this paper proposes a cepstrum preprocessing-based howling detection algorithm. First, the impact of howling components on cepstral coefficients is studied in both theory and simulation. Second, according to the theoretical results, the cepstrum preprocessing-based howling detection algorithm is proposed. The Receiver Operating Characteristic (ROC) simulation results indicate that the proposed algorithm can increase the detection probability at the same false alarm rate. Objective measurements, such as Speech Distortion (SD) and Maximum Stable Gain (MSG), further confirm the validity of the proposed algorithm.

Convention Paper 8978 (Purchase now)
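
For readers unfamiliar with the cepstral coefficients mentioned in the P12-4 abstract, the sketch below computes a standard real cepstrum (the inverse DFT of the log magnitude spectrum) using only the Python standard library. It shows the textbook definition only and is not the proposed detection algorithm; the naive O(N²) DFT, the `floor` constant, and the toy test signal are assumptions chosen to keep the example short.

```python
import cmath
import math


def dft(x):
    """Naive discrete Fourier transform, adequate for short frames."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n)
                for t in range(n))
            for k in range(n)]


def real_cepstrum(x, floor=1e-12):
    """Real cepstrum: inverse DFT of the log magnitude spectrum.

    floor is an assumed lower limit that avoids log(0).
    """
    n = len(x)
    log_mag = [math.log(max(abs(v), floor)) for v in dft(x)]
    return [sum(log_mag[k] * cmath.exp(2j * math.pi * k * t / n)
                for k in range(n)).real / n
            for t in range(n)]


# Toy signal: an impulse plus a copy at half amplitude, 8 samples later.
# The regular ripple this puts on the spectrum appears as a cepstral
# peak at quefrency 8.
frame = [0.0] * 64
frame[0] = 1.0
frame[8] = 0.5
cep = real_cepstrum(frame)
peak_quefrency = max(range(1, 33), key=lambda q: cep[q])
```

The same mechanism is why voiced speech, with its harmonic spectrum, produces a cepstral peak at the pitch period.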

P12-5 Delayless Method to Suppress Transient Noise Using Speech Properties and Spectral Coherence—Chengshi Zheng, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Xiaoliang Chen, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Shiwei Wang, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Renhua Peng, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China; Xiaodong Li, Chinese Academy of Sciences - Beijing, China; Chinese Academy of Sciences - Shanghai, China

This paper proposes a novel delayless transient noise reduction method that is based on speech properties and spectral coherence. The proposed method has three stages. First, the transient noise components are detected in each subband by using energy-normalized variance. Second, we apply the harmonic property of the voiced speech and the continuity of the speech signal to reduce speech distortion in voiced speech segments. Third, we define a new spectral coherence to distinguish the unvoiced speech from the transient noise to avoid suppressing the unvoiced speech. Compared with existing methods, the proposed method is computationally efficient and causal. Experimental results show that the proposed algorithm can effectively suppress transient noise up to 30 dB without introducing audible speech distortion.

Convention Paper 8979 (Purchase now)

P12-6 Artificial Stereo Extension Based on Hidden Markov Model for the Incorporation of Non-Stationary Energy Trajectory—Nam In Park, Gwangju Institute of Science and Technology (GIST) - Gwangju, Korea; Kwang Myung Jeon, Gwangju Institute of Science and Technology (GIST) - Gwangju, Korea; Seung Ho Choi, Prof., Seoul National University of Science and Technology - Seoul, Korea; Hong Kook Kim, Gwangju Institute of Science and Tech (GIST) - Gwangju, Korea

In this paper an artificial stereo extension method is proposed to provide stereophonic sound from mono sound. While frame-independent artificial stereo extension methods, such as Gaussian mixture model (GMM)-based extension, do not consider the correlation of energies of previous frames, the proposed stereo extension method employs a minimum mean-squared error estimator based on a hidden Markov model (HMM) for the incorporation of non-stationary energy trajectory. The performance of the proposed stereo extension method is evaluated by a multiple stimuli with a hidden reference and anchor (MUSHRA) test. It is shown from the statistical analysis of the MUSHRA test results that the stereo signals extended by the proposed stereo extension method have significantly better quality than those of a GMM-based stereo extension method.

Convention Paper 8980 (Purchase now)

P12-7 Simulation of an Analog Circuit of a Wah Pedal: A Port-Hamiltonian Approach—Antoine Falaize-Skrzek, IRCAM - Paris, France; Thomas Hélie, IRCAM-CNRS UMR 9912-UPMC - Paris, France

Several methods are available to simulate electronic circuits. However, for nonlinear circuits, the stability guarantee is not straightforward. In this paper the approach of the so-called "Port-Hamiltonian Systems" (PHS) is considered. This framework naturally preserves the energetic behavior of elementary components and the power exchanges between them. This guarantees the passivity of the (source-free part of the) circuit.

Convention Paper 8981 (Purchase now)

P12-8 Improvement in Parametric High-Band Audio Coding by Controlling Temporal Envelope with Phase Parameter—Kijun Kim, Kwangwoon University - Seoul, Korea; Kihyun Choo, Samsung Electronics Co., Ltd. - Suwon, Korea; Eunmi Oh, Samsung Electronics Co., Ltd. - Suwon, Korea; Hochong Park, Kwangwoon University - Seoul, Korea

This study proposes a method to improve temporal envelope control in parametric high-band audio coding. Conventional parametric high-band coders may have difficulty controlling the fine high-band temporal envelope, which can cause deterioration in sound quality for certain audio signals. In this study a novel method is designed to control the temporal envelope using spectral phase as an additional parameter. The objective and subjective evaluations suggest that the proposed method should improve the quality of sound whose temporal envelope is severely degraded by the conventional method.

Convention Paper 8982 (Purchase now)

Friday, October 18, 5:00 pm — 6:30 pm (Room 1E15/16)

Special Event: Inside Abbey Road 1967—Photos from the Sgt. Pepper Sessions

Moderator:Allan Kozinn, NY Times - New York, NY, USA

Panelists:

Henry Grossman

Brian Kehew, CurveBender Publishing - Los Angeles, CA, USA

Abstract:

Allan Kozinn, noted Beatles expert and reviewer for the NY Times, will moderate this panel, which offers a behind-the-scenes look at EMI/Abbey Road studios during the making of the landmark "Sgt. Pepper's Lonely Hearts Club Band." Famed Beatles photographer Henry Grossman visited the sessions, where he took several hundred photos, many of which are still largely unseen. Henry will show photos and share memories of that creative era. Brian Kehew (co-author of the acclaimed Recording the Beatles book) will illustrate key technical aspects found in Grossman's photos. (Henry Grossman is also the author of Kaleidoscope Eyes: A Day in the Life of Sgt. Pepper and Places I Remember: My Time with The Beatles, considered two of the greatest collections of Beatles photography to date.)

Friday, October 18, 5:00 pm — 6:30 pm (Room 1E13)

Historical: The Art of Recording the Big Band, Revisited

Presenter:Robert Auld, Auldworks - New York, NY, USA

Abstract: